Can Cryogenic Computing Solve the Data Center Energy Crisis? What the Research Actually Shows

Data centers consumed about 415 TWh in 2024, and efficiency gains have stalled. Here's what the science actually says about cryogenic computing as a solution.

Cryogenic Computing Systems for Ultra-Low Energy Data Centers

The physics, the promise, and the real state of the technology

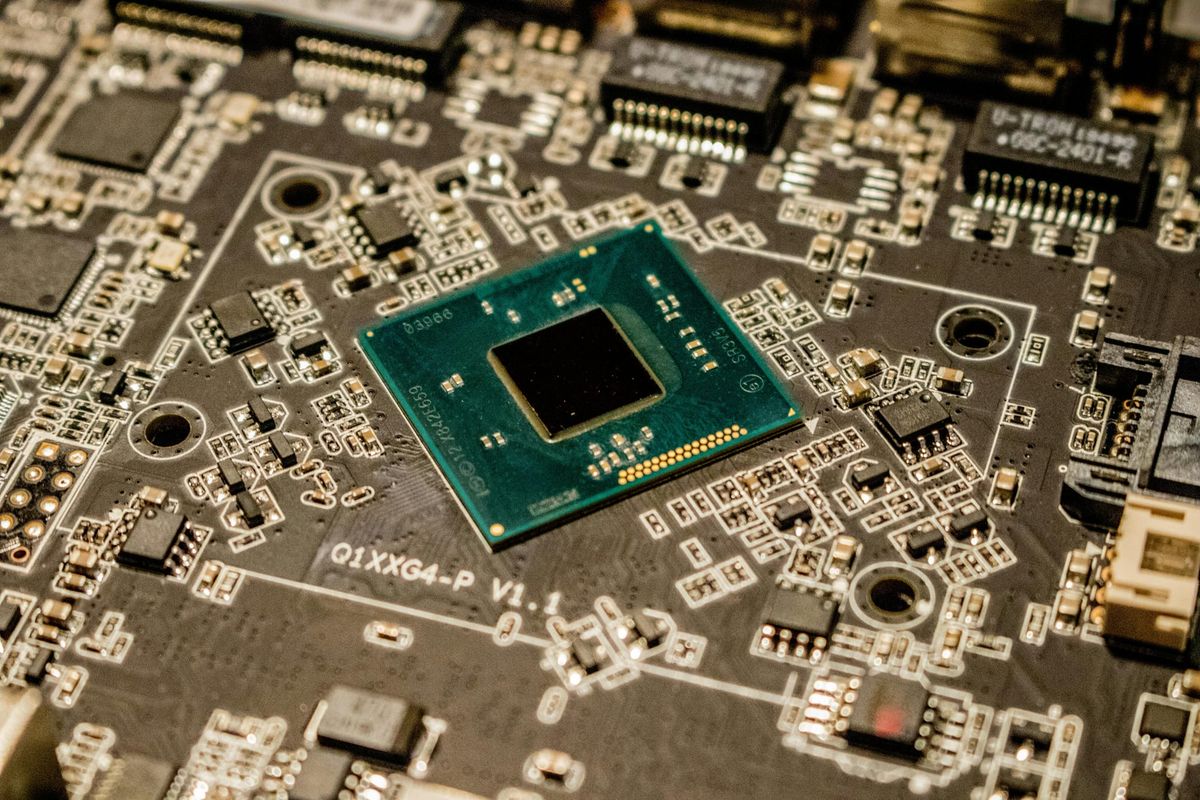

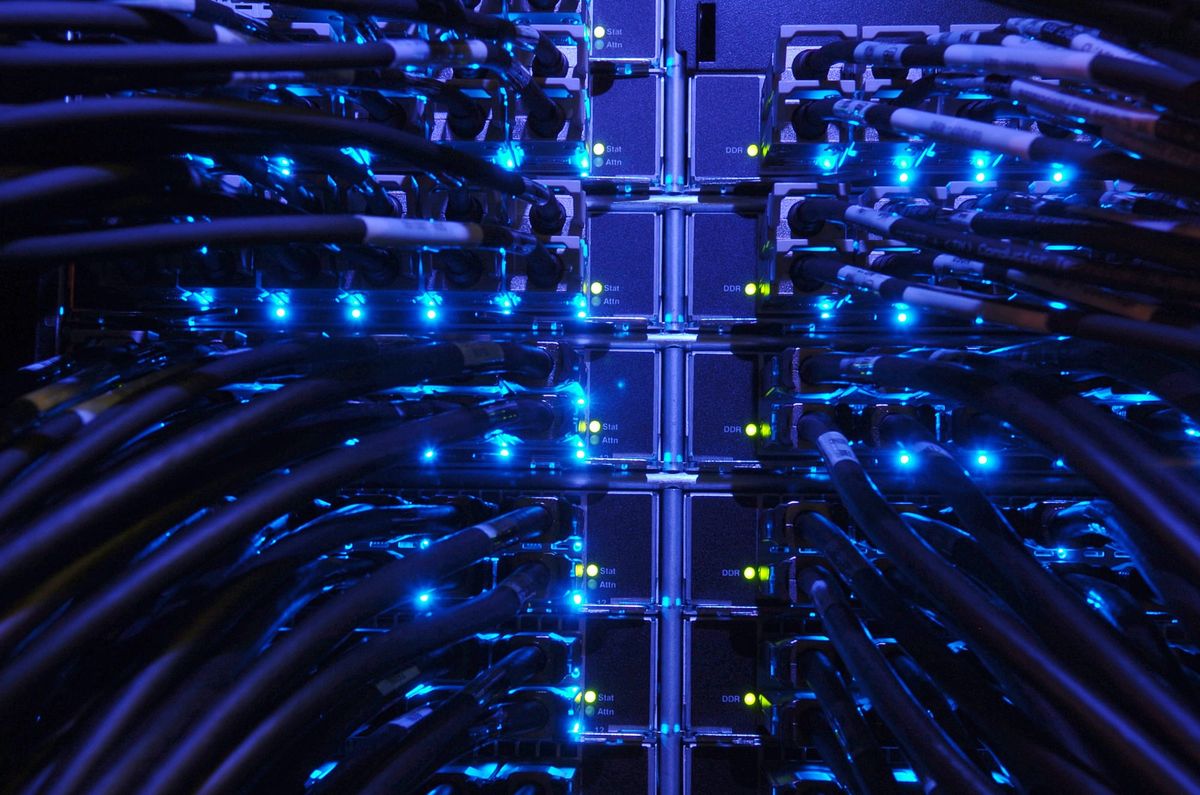

Data centers are approaching an energy inflection point. According to the International Energy Agency's April 2025 Energy and AI report, global data center electricity consumption stood at approximately 415 TWh in 2024 (about 1.5% of worldwide electricity use) and is projected to nearly double to 945 TWh by 2030, driven primarily by artificial intelligence workloads. In the United States alone, data centers are expected to consume more electricity by the end of the decade than all energy-intensive manufacturing industries combined, including steel, aluminum, cement, and chemicals.
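Going from about 415 TWh to a projected 945 TWh in six years implies a steep compound growth rate. Below is a minimal sketch of that arithmetic. It assumes smooth compound growth between 2024 and 2030, although the IEA's actual year-by-year trajectory need not be smooth. `implied_cagr` is a hypothetical helper name.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years` years."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1.0 / years) - 1.0


# IEA figures quoted above: ~415 TWh in 2024, ~945 TWh projected for 2030.
rate = implied_cagr(415.0, 945.0, 2030 - 2024)
print(f"Implied growth: {rate:.1%} per year")
```

Under that assumption, the projection corresponds to growth of just under 15% per year.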

Meanwhile, progress on efficiency has plateaued. According to the Uptime Institute's 2024 Global Data Center Survey, the industry average Power Usage Effectiveness (PUE), which is the ratio of total facility power to power actually used by computing equipment, sits at approximately 1.56, meaning roughly 36% of all energy drawn by a typical data center goes not to computation, but to cooling, power distribution, and other overhead. That number has barely shifted since 2020.
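The 36% figure follows directly from the definition of PUE. IT equipment receives 1/PUE of the facility's energy, and the remainder is overhead. Here is a short sketch. The 1.2 and 1.1 values are hypothetical comparison points, not survey results.

```python
def overhead_fraction(pue: float) -> float:
    """Share of total facility energy that does not reach IT equipment.

    PUE = total facility power / IT equipment power, so IT equipment gets
    1/PUE of the total and everything else is overhead.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue


# 1.56 is the Uptime Institute average; 1.2 and 1.1 are hypothetical.
for pue in (1.56, 1.2, 1.1):
    print(f"PUE {pue:.2f}: {overhead_fraction(pue):.0%} of facility energy is overhead")
```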

415 TWh - Global data center electricity use, 2024 (IEA)

1.56 - Industry average PUE, 2024 (Uptime Institute)

~0.5% - Data centers' share of global CO₂ emissions (IEA / Carbon Brief)

Incremental improvements, such as better cooling design, liquid immersion cooling, and renewable energy procurement, are valuable. On their own, however, they cannot close the gap between soaring demand and sustainability targets. This has renewed serious interest in a fundamentally different approach to computing: cryogenic systems based on superconducting logic. These systems operate at temperatures near absolute zero and could reduce energy per computation by several orders of magnitude compared with conventional silicon.

This article examines what cryogenic computing actually is, what the science supports, where research programs stand today, and which barriers still stand between the laboratory and the data center floor.

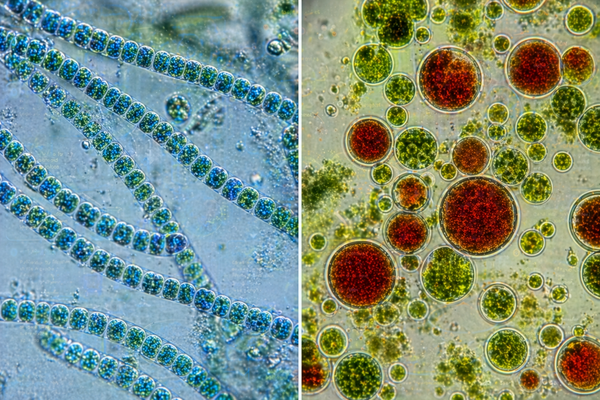

The Physics of Superconductivity

Superconductivity was first observed on April 8, 1911, when Dutch physicist Heike Kamerlingh Onnes cooled mercury to 4.2 Kelvin (-269°C) and found that its electrical resistance vanished entirely. The experiment was possible only because Kamerlingh Onnes had become the first person to liquefy helium, in 1908. The discovery revealed what is now understood as a distinct quantum state of matter: below a material-specific critical temperature, certain materials conduct electricity with zero resistance and expel magnetic fields. Kamerlingh Onnes received the 1913 Nobel Prize in Physics for this body of low-temperature research.

For computing, the implications are profound. Conventional silicon transistors generate heat as a direct consequence of electrical resistance, and this waste heat is the primary driver of both the energy consumption and the cooling burden of modern data centers. Superconducting circuits are built from Josephson junctions, which are quantum tunnel junctions made from a superconductor-insulator-superconductor sandwich, and they eliminate resistive losses in their interconnects. IARPA's foundational Cryogenic Computing Complexity (C3) program documentation notes that Josephson junctions switch in approximately one picosecond and dissipate less than 10⁻¹⁹ joules per switching event. They communicate via small current pulses that travel over superconducting lines with near-zero loss. For reference, a typical CMOS transistor dissipates on the order of 10⁻¹⁵ joules per operation, roughly 10,000 times more.

The key insight: superconducting Josephson junctions operate at roughly 10,000× lower energy per switching event than conventional silicon transistors, not as a theoretical ceiling, but as a demonstrated laboratory measurement. The challenge is building complete computing systems around them.

Superconducting Logic Families: What Research Actually Shows

Rapid Single Flux Quantum (RSFQ) Logic

The most mature superconducting logic family is Rapid Single Flux Quantum (RSFQ) logic, which encodes binary information as the presence or absence of a single magnetic flux quantum. RSFQ circuits have been demonstrated operating at switching speeds exceeding 100 GHz. However, conventional RSFQ requires continuous DC bias currents distributed through resistors, which dissipate static power that can exceed the dynamic switching power by more than tenfold, a significant efficiency limitation identified in the research literature.
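To put the RSFQ numbers in perspective: the energy of one SFQ switching event is commonly estimated as the junction's critical current times the magnetic flux quantum, E ≈ I_c·Φ₀, where Φ₀ = h/2e ≈ 2.07 × 10⁻¹⁵ Wb. The sketch below combines that estimate with illustrative assumptions that do not come from this article:

- a 100 µA critical current
- DC bias at 70% of I_c, supplied from a 2.5 mV rail through resistors
- one switching event per clock cycle at 20 GHz

Together these show how resistive bias can dominate the power budget.

```python
# Exact SI values (2019 redefinition).
PLANCK_H = 6.62607015e-34            # J*s
ELEMENTARY_CHARGE = 1.602176634e-19  # C
FLUX_QUANTUM = PLANCK_H / (2 * ELEMENTARY_CHARGE)  # Phi_0, about 2.07e-15 Wb


def sfq_switching_energy(critical_current_a: float) -> float:
    """Rough energy of one SFQ switching event: E ~ I_c * Phi_0."""
    return critical_current_a * FLUX_QUANTUM


# Illustrative assumptions (hypothetical, not taken from the article):
I_C = 100e-6           # junction critical current: 100 uA
BIAS_FRACTION = 0.7    # DC bias current as a fraction of I_c
BIAS_VOLTAGE = 2.5e-3  # supply rail feeding the bias resistors: 2.5 mV
CLOCK_HZ = 20e9        # 20 GHz, one switching event per cycle

e_switch = sfq_switching_energy(I_C)
dynamic_w = e_switch * CLOCK_HZ                # switching power per junction
static_w = BIAS_FRACTION * I_C * BIAS_VOLTAGE  # I*V burned in the bias network

print(f"switching energy ~ {e_switch:.2e} J")
print(f"static / dynamic power ~ {static_w / dynamic_w:.0f}x")
```

Real circuits have lower switching activity than this, which pushes the static share even higher. This is the overhead that ERSFQ and RQL were designed to remove.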

Energy-Efficient Variants: ERSFQ and RQL

To address RSFQ's static power problem, researchers developed Energy-Efficient RSFQ (ERSFQ). ERSFQ replaces resistive bias with inductive current limiters, eliminating static dissipation while keeping the same cell library. A 2022 survey of superconducting computing technology (available on ResearchGate) reports that ERSFQ achieves bit-switch energy of around 2 × 10⁻¹⁹ joules with zero static power dissipation. Reciprocal Quantum Logic (RQL), developed at Northrop Grumman, takes a different approach: it uses AC power and bidirectional pulses, and it also achieves near-zero static dissipation. Under IARPA's C3 program, MIT Lincoln Laboratory fabricated a shift register containing 810,000 Josephson junctions on a 1 cm × 1 cm chip. At the time, this was a world record in junction count for SFQ circuits.

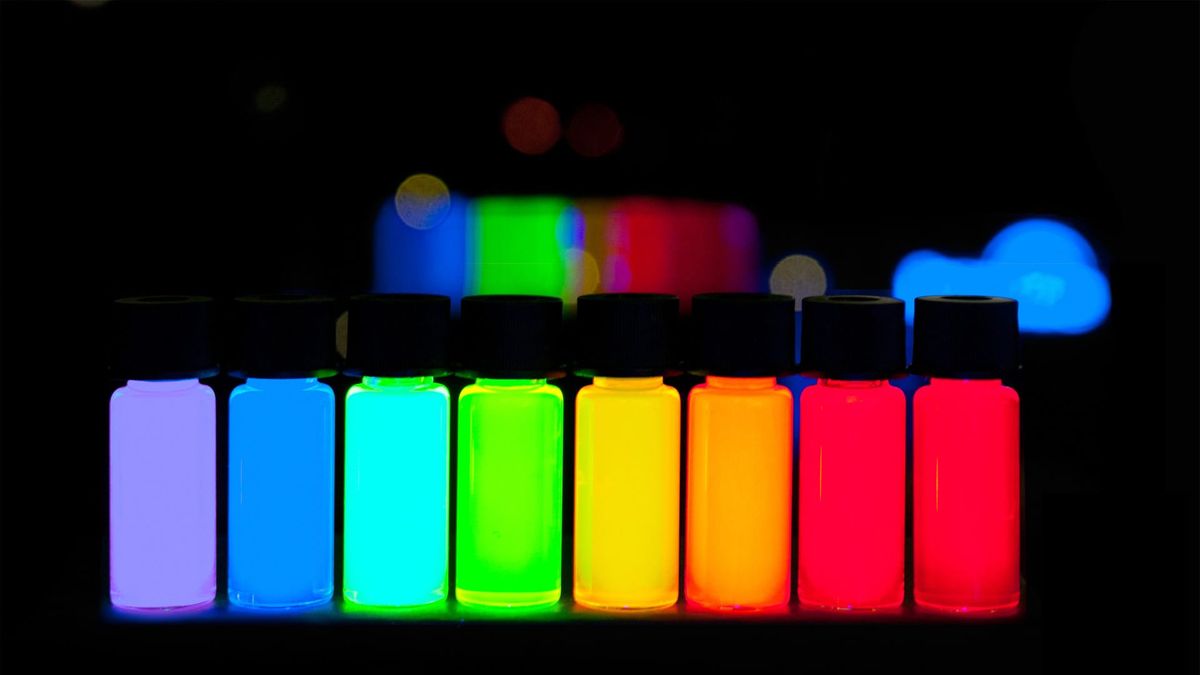

Adiabatic Quantum Flux Parametron (AQFP) Logic

The most energy-efficient superconducting logic family demonstrated to date is Adiabatic Quantum Flux Parametron (AQFP) logic, developed primarily at Yokohama National University. AQFP uses AC excitation currents as both clock and power supply, enabling near-reversible, adiabatic switching. According to a 2022 tutorial review published in IEICE Transactions on Electronics (Takeuchi et al.), a demonstrated 8-bit carry-look-ahead adder showed energy dissipation of 1.4 × 10⁻²¹ joules, equivalent to 24kBT, and approaching the thermodynamic Landauer limit. A 2019 paper in Scientific Reports (Chen et al., DOI: 10.1038/s41598-019-46595-w) demonstrated that AQFP can achieve an energy-delay product near the quantum limit. In January 2021, a 2.5 GHz AQFP-based prototype processor called MANA (Monolithic Adiabatic iNtegration Architecture) was reported to achieve energy efficiency approximately 80 times better than traditional semiconductor processors, even after accounting for cooling overhead.
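The "24 kBT" equivalence can be checked directly. The sketch below assumes the AQFP measurement refers to operation at 4.2 K, the liquid-helium temperature. It also compares the reported figure with the Landauer limit, kBT ln 2, at the same temperature.

```python
import math

BOLTZMANN_K = 1.380649e-23  # J/K, exact SI value


def thermal_energy(temp_k: float) -> float:
    """Characteristic thermal energy k_B * T in joules."""
    return BOLTZMANN_K * temp_k


def landauer_limit(temp_k: float) -> float:
    """Minimum heat released when one bit is erased: k_B * T * ln 2."""
    return thermal_energy(temp_k) * math.log(2)


T_OPERATING = 4.2     # assumption: liquid-helium temperature, in kelvin
reported_j = 1.4e-21  # AQFP adder figure quoted above

print(f"k_B*T at {T_OPERATING} K    = {thermal_energy(T_OPERATING):.2e} J")
print(f"24 * k_B*T           = {24 * thermal_energy(T_OPERATING):.2e} J")
print(f"reported / Landauer  = {reported_j / landauer_limit(T_OPERATING):.0f}x")
```

Under that assumption, the reported figure sits a few dozen times above the Landauer limit. That is what "approaching" means here: far closer to the limit than CMOS, but not at it.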

The Cooling Challenge: Infrastructure Realities

The efficiency gains of superconducting logic only translate to real-world benefits if the cooling infrastructure required to reach cryogenic temperatures is itself tractable. This is one of the most significant practical challenges.

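Superconducting logic based on niobium (the most common fabrication material, with a critical temperature of about 9.2 K) requires operation at approximately 4 Kelvin. That temperature is typically reached with liquid helium or closed-cycle cryocoolers such as pulse-tube refrigerators. Dilution refrigerators, which reach millikelvin temperatures, are needed for superconducting qubits rather than for SFQ logic. Operating at 77 Kelvin (the boiling point of liquid nitrogen, which is abundant and inexpensive) is possible for some cryogenic CMOS applications, but superconducting SFQ circuits require the colder 4 K regime.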
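Part of the reason 4 K is so much more demanding than 77 K is thermodynamic. An ideal (Carnot) refrigerator must spend at least (T_hot − T_cold)/T_cold joules of work to remove one joule of heat at T_cold. The minimal sketch below assumes heat is rejected at a 300 K ambient. Real cryocoolers reach only a fraction of Carnot efficiency, so their actual overhead is considerably higher.

```python
def carnot_work_per_watt(cold_k: float, hot_k: float = 300.0) -> float:
    """Ideal (Carnot) input work needed to remove 1 W of heat at cold_k
    and reject it at hot_k: W / Q = (T_hot - T_cold) / T_cold."""
    if not 0 < cold_k < hot_k:
        raise ValueError("need 0 < cold_k < hot_k")
    return (hot_k - cold_k) / cold_k


for stage in (77.0, 4.2):
    print(f"{stage:>5} K: at least {carnot_work_per_watt(stage):.1f} W of input per W removed")
```

Even an ideal machine needs about 70 W of input per watt removed at 4.2 K, versus about 3 W at 77 K. That is roughly a 24-fold difference before any real-world inefficiency.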

The critical question is whether the energy savings in computation outweigh the energy cost of refrigeration. Research suggests the answer can be yes, but with important caveats. IARPA's C3 program ran through the mid-2010s and aimed to establish superconducting computing as a practical technology. It projected that superconducting supercomputers could potentially achieve 1 PFLOP/s for about 25 kW and 100 PFLOP/s for about 200 kW, including the cryogenic cooler. As program manager Marc Manheimer stated: "Computers based on superconducting logic integrated with new kinds of cryogenic memory will allow expansion of current computing facilities while staying within space and energy budgets, and may enable supercomputer development beyond the exascale." These remain projections rather than demonstrated results for complete systems.
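The trade-off can be framed as a break-even calculation. If every joule dissipated at 4 K costs C joules of refrigeration work, the wall-plug energy per operation is E × (1 + C). The sketch below uses the device energies quoted earlier (10⁻¹⁹ J per junction switch, 10⁻¹⁵ J per CMOS operation) and hypothetical values for C. It ignores CMOS cooling overhead, I/O, and memory. It also treats one junction switch as equivalent to one CMOS operation, which it is not. Treat it as an order-of-magnitude toy. It also converts the C3 projections into GFLOP/s per watt. All function names are hypothetical.

```python
def wall_plug_energy(device_j: float, cooling_w_per_w: float) -> float:
    """Grid energy per operation when each joule dissipated at the cold
    stage costs `cooling_w_per_w` joules of refrigeration work."""
    return device_j * (1.0 + cooling_w_per_w)


def gflops_per_watt(pflops: float, kilowatts: float) -> float:
    """Convert a (PFLOP/s, kW) pair into GFLOP/s per watt."""
    return pflops * 1e15 / (kilowatts * 1e3) / 1e9


# Device energies quoted earlier in the article (orders of magnitude).
JJ_J = 1e-19    # Josephson junction switching event (upper bound)
CMOS_J = 1e-15  # CMOS transistor operation

# Hypothetical refrigeration overheads at 4 K; real values depend on the cryocooler.
for overhead in (100, 1000, 5000):
    advantage = CMOS_J / wall_plug_energy(JJ_J, overhead)
    print(f"{overhead:>5} W/W cooling: superconducting still ~{advantage:.0f}x lower")

# Break-even: the overhead at which the device-level advantage disappears.
print(f"break-even overhead ~ {CMOS_J / JJ_J - 1:.0f} W/W")

# C3-era projections quoted above (cryogenic cooler included).
print(f"1 PFLOP/s @ 25 kW    -> {gflops_per_watt(1, 25):.0f} GFLOP/s per W")
print(f"100 PFLOP/s @ 200 kW -> {gflops_per_watt(100, 200):.0f} GFLOP/s per W")
```

Under these assumptions, the device-level advantage survives cooling overheads up to roughly 10,000 W/W. The C3 projections work out to about 40 and 500 GFLOP/s per watt, respectively.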

A 2024 review on cryogenic electronics for high-performance computing (published on ScienceDirect) noted that cryogenic superconducting systems have been shown to use around 80 times less energy than room-temperature 7 nm CMOS technology, even when the cost of helium cooling is included. However, this comparison was made at the component level. Building complete computing systems, including memory, interconnects, and input/output at scale, remains an unsolved challenge.

The Memory Problem: The Field's Central Bottleneck

If there is one challenge that experts consistently identify as the primary obstacle to practical cryogenic computing, it is memory. A 2023 review in Nature Electronics (Alam et al., DOI: 10.1038/s41928-023-00930-2) summarized the problem clearly: the lack of compatible cryogenic memory technology that can operate at 4 K or lower is the central barrier to practical and scalable superconducting systems.

Conventional DRAM has been tested at cryogenic temperatures and shown to function at 77 K with manageable error rates. But integrating room-temperature memory with a 4 K processor requires crossing a significant thermal boundary, which introduces latency and complexity. The ideal solution would be a fast, dense, energy-efficient memory that operates natively at 4 K and interfaces directly with SFQ circuits, and it does not yet exist at scale. IARPA's C3 program explored several candidate technologies, including JMRAM (Josephson Magnetic RAM), spin-Hall-effect memory, and conventional MRAM adapted for cryogenic operation. As part of the C3 program, NIST researchers demonstrated a first integration of a superconducting switch controlling a cryogenic memory element. This was a meaningful step, but far from a deployable memory subsystem.

Recent work has made incremental progress. A 2025 paper in Nature Materials from HKUST (Liu et al., DOI: 10.1038/s41563-024-02088-4) demonstrated cryogenic in-memory computing using magnetic topological insulators, achieving simulated neural network performance of 724 TOPS/W at 2 K, which is an encouraging result for AI-adjacent workloads. But as researchers from the nanoscale computing community have noted, superconducting approaches "presently suffer from several key drawbacks that make their use in large-scale control circuits difficult," including large device footprint, lack of native memory elements, sensitivity to magnetic flux trapping, and a limited ecosystem of electronic design automation tools.

Quantum Computing: The Nearest-Term Driver

The most immediate real-world application of cryogenic electronics is in quantum computing control. All leading superconducting qubit platforms (IBM, Google, IQM, and others) inherently require sub-Kelvin operating environments. A critical and growing challenge is how to interface the qubits with classical control electronics without running thousands of cables from millikelvin temperatures to room temperature, each of which introduces heat and limits scalability.

This has driven significant investment in cryogenic CMOS: conventional silicon circuits engineered to operate at around 4 K and co-located near the quantum processor. At MRS Spring 2023, IBM presented work on cryogenic qubit control circuits. The work demonstrated RF arbitrary waveform generators in commercial 14nm FinFET technology with low power dissipation at cryogenic temperatures. Google, Intel, and the University of Sydney have all demonstrated cryogenic CMOS control electronics operating inside the same refrigerators as qubit chips, at stages ranging from a few kelvin down to millikelvin temperatures. The synergy is real: the same cooling infrastructure required for quantum computers can serve as a testbed and early deployment environment for classical cryogenic logic.

Reversible Computing: Addressing the Root Cause

Cryogenic hardware addresses the symptoms of computing's energy problem (resistive losses, thermal noise, leakage currents), but a parallel line of research targets the root cause itself. Landauer's principle was first articulated by IBM physicist Rolf Landauer in 1961. It established that every logically irreversible operation must dissipate at least kBT ln 2 of energy as heat, where kB is Boltzmann's constant and T is the temperature. An operation is irreversible whenever a bit is overwritten and its previous state is permanently lost. This is not an engineering limitation but a thermodynamic one. Erasing a bit reduces the entropy of the computer's memory, so the second law requires a compensating entropy increase in the environment, which is released as heat. Today's CMOS processors erase information on every clock cycle across billions of transistors. IEEE Spectrum reported in 2025 that they do so at energy levels more than a thousand times above the Landauer minimum.

Reversible computing sidesteps this constraint by designing circuits that never permanently destroy information. Instead, intermediate computational states are preserved and then "decomputed" once they are no longer needed, as theorized by IBM's Charles Bennett in the 1970s. In principle, a fully reversible computer could perform arbitrarily complex calculations while dissipating arbitrarily little energy, provided it runs slowly enough.

In practice, Michael Frank left Sandia National Laboratories in 2024 to commercialize reversible computing at the startup Vaire Computing. He and other researchers argue that even partial reversibility could yield dramatic efficiency gains. They also see AQFP superconducting logic as one of the most promising physical substrates for it, since its adiabatic switching mechanism already approximates thermodynamically reversible operation. The convergence of reversible logic design with cryogenic superconducting hardware is therefore the field's most theoretically compelling long-term pathway. It attacks both the physics of heat generation and the information-theoretic source of that heat at once.
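Bennett's "compute, copy, uncompute" idea can be illustrated at the level of logic. The sketch below is a hypothetical example, and its function names are hypothetical too. It works in three steps:

1. Compute x AND y AND z into scratch bits, using only Toffoli gates. Toffoli and CNOT gates are both bijections on the register state.
2. Copy the answer into a clean output bit with a CNOT gate.
3. Run step 1 backwards so the scratch bits return to 0.

No information is erased along the way. This shows logical reversibility only. It says nothing about how AQFP or any other hardware realizes reversibility physically.

```python
from itertools import product
from typing import List


def toffoli(bits: List[int], a: int, b: int, target: int) -> None:
    """Reversible AND: flip bits[target] iff bits[a] and bits[b] are both 1.
    Applying the same gate twice restores the original state."""
    bits[target] ^= bits[a] & bits[b]


def cnot(bits: List[int], control: int, target: int) -> None:
    """Reversible XOR: flip bits[target] iff bits[control] is 1.
    Into a target that starts at 0, this copies the control bit."""
    bits[target] ^= bits[control]


def and3_bennett(x: int, y: int, z: int) -> List[int]:
    """Hypothetical example: x AND y AND z, computed Bennett-style.

    Register layout: [x, y, z, s1, s2, out]; the scratch bits s1, s2 and
    the output bit all start at 0.
    """
    bits = [x, y, z, 0, 0, 0]
    # 1. Compute: build the answer in scratch space.
    toffoli(bits, 0, 1, 3)  # s1 = x AND y
    toffoli(bits, 3, 2, 4)  # s2 = s1 AND z
    # 2. Copy: XOR the result into the clean output bit.
    cnot(bits, 4, 5)        # out = s2
    # 3. Uncompute: undo step 1 in reverse order so scratch returns to 0.
    toffoli(bits, 3, 2, 4)  # s2 back to 0
    toffoli(bits, 0, 1, 3)  # s1 back to 0
    return bits


for x, y, z in product((0, 1), repeat=3):
    state = and3_bennett(x, y, z)
    print((x, y, z), "-> out =", state[5], "| scratch =", state[3:5])
```

Every row ends with the inputs intact, the scratch bits back at 0, and the answer in the output bit. The only cost is the extra gates needed to run the computation backwards.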

Reversible computing and cryogenic superconducting logic are complementary, not competing, approaches. AQFP's adiabatic switching is already a partial implementation of reversible principles, making the two technologies natural allies in the pursuit of ultra-low-energy computation.

Where the Technology Actually Stands

It is worth being explicit about what has and has not been demonstrated.

What is real and demonstrated:

- Josephson junction switching at sub-picosecond speeds with <10⁻¹⁹ J per operation (IARPA C3 program documentation; MIT Lincoln Laboratory)

- ERSFQ circuits with zero static power and bit-switch energy around 2 × 10⁻¹⁹ J (APL Quantum, 2025)

- AQFP circuits operating at 24kBT energy dissipation, approaching Landauer's limit (Takeuchi et al., IEICE 2022)

- The MANA processor achieving ~80× better energy efficiency than CMOS, including cooling (Wikipedia / primary literature, 2021)

- 810,000-junction SFQ shift register on a 1 cm² chip (MIT Lincoln Laboratory / DTIC, C3 program)

- Cryogenic DRAM operating at 77 K with low error rates (Tannu et al., 2017)

- Cryogenic in-memory computing at 724 TOPS/W simulated (Liu et al., Nature Materials 2025)

What remains undemonstrated at scale:

- A complete superconducting computing system (processor + memory + I/O) operating at useful computational scales

- Exaflop-class cryogenic performance - this does not yet exist; it remains a long-range projection

- Cost-competitive cryogenic data center deployments outside of highly specialized or government research contexts

- Dense, fast, native 4 K memory compatible with SFQ logic at meaningful capacity

The Road Ahead

The energy crisis facing data centers is real and worsening. The physics of superconducting computing is genuinely compelling; the efficiency advantage over silicon at the device level is not theoretical but measured. The IARPA C3 program established that a superconducting computing path to exascale performance at a fraction of the power of conventional systems is physically plausible.

But "plausible" and "demonstrated" are different things. The field currently sits at a critical juncture: component-level results are impressive, but system-level integration, especially memory, remains the central unsolved problem. The near-term growth of cryogenic electronics will likely be driven by quantum computing infrastructure, where the cooling systems already exist and the incentives to develop cryogenic control logic are immediate. Classical superconducting HPC, while promising, is likely a decade or more from data center deployment at scale.

For strategists and technology evaluators, the honest framing is this: cryogenic computing is not a near-term solution to the data center energy problem, but it may be one of the most important long-term ones. The physics is on its side. The engineering challenges are substantial but not insurmountable. The question is not whether it will work, but when.

Sources & Further Reading

International Energy Agency. Energy and AI. IEA, Paris, April 2025. iea.org

Uptime Institute. Global Data Center Survey 2024. Uptime Institute, July 2024.

IEA. Data Centres and Data Transmission Networks. IEA energy system tracker, updated 2023. iea.org

Holmes, D.S., Ripple, A.L., & Manheimer, M.A. "Energy-Efficient Superconducting Computing - Power Budgets and Requirements." IEEE Transactions on Applied Superconductivity, vol. 23, no. 3, p. 1701610, 2013. DOI: 10.1109/TASC.2013.2244634

Manheimer, M.A. "Cryogenic Computing Complexity Program: Phase 1 Introduction." IEEE Transactions on Applied Superconductivity, vol. 25, no. 3, 2015. DOI: 10.1109/TASC.2015.2399…

IARPA. Cryogenic Computing Complexity (C3) Program. iarpa.gov/research-programs/c3

Takeuchi, N., Yamae, T., Ayala, C.L., Suzuki, H., & Yoshikawa, N. "Adiabatic Quantum-Flux-Parametron: A Tutorial Review." IEICE Transactions on Electronics, vol. E105-C, no. 6, June 2022.

Chen, O. et al. "Adiabatic Quantum-Flux-Parametron: Towards Building Extremely Energy-Efficient Circuits and Systems." Scientific Reports 9, 10514 (2019). DOI: 10.1038/s41598-019-46595-w

Alam, S., Hossain, M.S., Srinivasa, S.R., & Aziz, A. "Cryogenic Memory Technologies." Nature Electronics, vol. 6, pp. 185–198 (2023). DOI: 10.1038/s41928-023-00930-2

Liu, Y., Shi, S., Liu, J., Liu, C., Wang, J., Zhang, W., Shao, Q. et al. "Cryogenic in-memory computing using magnetic topological insulators." Nature Materials, 2025. DOI: 10.1038/s41563-024-02088-4

Tolpygo, S.K. et al. "IARPA C3 Program: SFQ Fabrication at MIT Lincoln Laboratory." DTIC / MIT Lincoln Laboratory, 2019. apps.dtic.mil

Resch, S. & Cilasun, H. "Cryogenic PIM: Challenges & Opportunities." IEEE Computer Architecture Letters, vol. 20, no. 1 (2021).

van Delft, D. & Kes, P. "The Discovery of Superconductivity." Physics Today, vol. 63, no. 9 (2010). DOI: 10.1063/1.3490499

IBM Research. "Cryogenic Electronics for Quantum Computing: From Materials to Devices." MRS Spring 2023 presentation. research.ibm.com